QCG Libraries and Cross-cluster communication

From a development perspective, QosCosGrid applications were grouped into two classes: (a) Java applications that use the ProActive library as their parallelization technology, and (b) applications written in ANSI C or similar languages that rely on the message-passing paradigm. Accordingly, QosCosGrid was designed to support two parallel programming and execution environments: QCG-OpenMPI (for developers of parallel C/C++ and Fortran applications) and QCG-ProActive (for developers of parallel Java applications).

Additional services, called coordinators and implemented as Web services, were required to support the spawning of parallel application processes on co-allocated computational resources. The main reason is that the standard deployment methods used in OpenMPI and ProActive rely on either RSH/SSH or specific local queuing functionality. Both are limited to single-cluster runs (e.g., the SSH-based deployment methods are problematic if at least one cluster has worker nodes with private IP addresses).

Considering the existing cluster configurations, we can distinguish two general situations:

- a computing cluster with public IP addresses - both the front-end and the worker nodes have public IP addresses. Typically, a firewall is used to restrict the access to internal nodes.

- a computing cluster with private IP addresses - only the front-end machine is accessible from the Internet; all the worker nodes have private IP addresses. Typically, NAT is used to provide outbound connectivity.

These two cluster configuration types determine which inter-cluster communication technique QosCosGrid uses: port range for clusters with public IP addresses and proxy for clusters with private IP addresses.
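The mapping from cluster configuration to technique can be illustrated with a minimal sketch. It is not part of QosCosGrid: the function name `choose_technique` and the idea of deciding from a list of worker addresses are assumptions made for illustration only, built on Python's standard `ipaddress` module.

```python
import ipaddress

def choose_technique(worker_ips):
    """Return "proxy" if any worker node has a private address, else "port range".

    Hypothetical helper, not a QosCosGrid API. Note that is_private also
    flags loopback, link-local and documentation ranges, not only the
    classic 10/8, 172.16/12 and 192.168/16 blocks.
    """
    if any(ipaddress.ip_address(ip).is_private for ip in worker_ips):
        return "proxy"
    return "port range"

# Example addresses are arbitrary and chosen only to exercise both branches.
print(choose_technique(["8.8.8.8", "8.8.4.4"]))
print(choose_technique(["10.0.0.5", "10.0.0.6"]))
```

A single privately addressed worker is enough to select the proxy branch here, which mirrors the observation above that SSH-based deployment already breaks if at least one cluster has such nodes.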

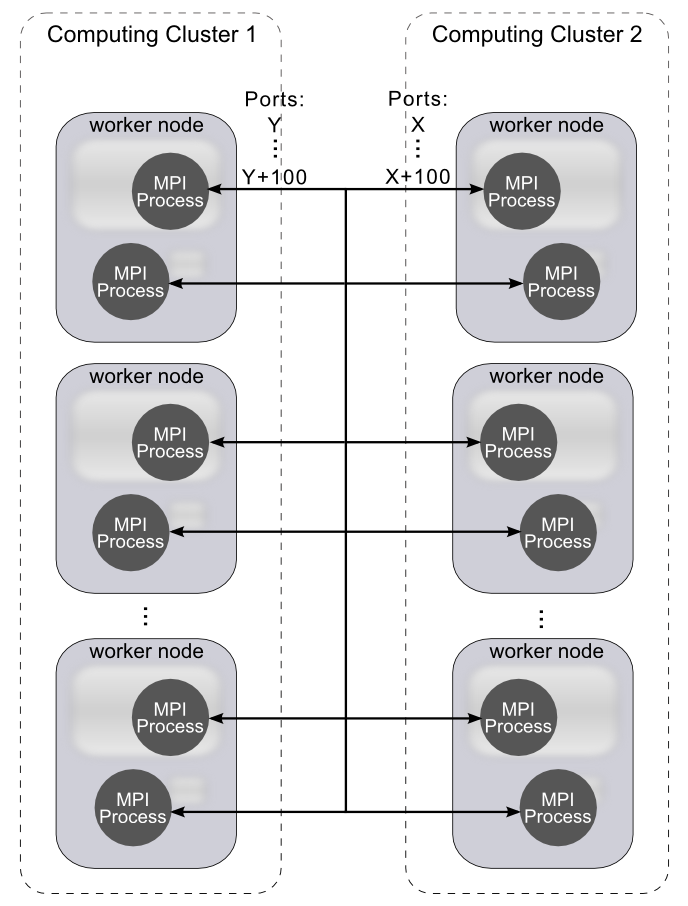

Port range technique

The Port Range technique is a simple approach that makes the deployment of parallel environments firewall-friendly. By default, most existing parallel environments listen for incoming TCP/IP traffic on random ports. This makes cross-domain application execution almost impossible, because most system administrators refuse to open all inbound ports to the Internet for security reasons. When the parallel environments are forced to use only a predefined range of unprivileged ports, administrators can much more easily configure the firewall to allow incoming MPI and ProActive traffic without exposing critical system services to the Internet. As the first figure shows, each site administrator has to choose a range of ports for the parallel communication (e.g. 5000-5100) and configure the firewall accordingly. Note that the Port Range technique solves the cross-cluster connectivity problem only for computing clusters where all worker nodes have public IP addresses.
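The core idea, listening only on ports from an agreed range instead of letting the operating system pick one at random, can be sketched as follows. This is an illustration with plain sockets, not the mechanism QCG-OpenMPI or QCG-ProActive actually use; the helper name `bind_in_range` is hypothetical, and the range 5000-5100 is taken from the example above.

```python
import socket

def bind_in_range(first_port, last_port, host=""):
    """Return a listening TCP socket bound to the first free port in
    [first_port, last_port]; raise OSError if the whole range is busy.

    Hypothetical helper for illustration only.
    """
    for port in range(first_port, last_port + 1):
        sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
        try:
            sock.bind((host, port))
        except OSError:
            sock.close()  # port already in use; try the next one
            continue
        sock.listen()
        return sock
    raise OSError(f"no free port in {first_port}-{last_port}")

if __name__ == "__main__":
    listener = bind_in_range(5000, 5100, "127.0.0.1")
    print("listening on port", listener.getsockname()[1])
    listener.close()
```

Because every listener falls inside the same range, the firewall needs only one inbound rule for that range rather than a rule covering all unprivileged ports.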

Proxy technique

In the second category of clusters, where worker nodes have private IP addresses, the Port Range technique is not sufficient because none of the worker nodes is addressable from outside networks. Therefore, in addition to the Port Range technique, which helps to separate incoming and outgoing traffic, we adopted a proxy technique. In this approach, additional SOCKS proxy services have to be deployed on the front-end machines to route incoming traffic to the MPI and ProActive processes running on local worker nodes inside the clusters. The proxy-based technique is outlined in the second figure.
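To give a feel for what a SOCKS proxy on the front-end is asked to do, the sketch below builds the two messages a client sends to request a connection to a privately addressed worker. The text does not state which SOCKS version QosCosGrid uses or which side initiates the request, so this assumes SOCKS5 (RFC 1928), no authentication, and an IPv4 destination; the function name and the example address are hypothetical.

```python
import ipaddress
import struct

# SOCKS5 greeting: version 5, one auth method offered, 0x00 = no authentication.
SOCKS5_GREETING = b"\x05\x01\x00"

def socks5_connect_request(worker_ip, port):
    """Build a SOCKS5 CONNECT request for an IPv4 destination (RFC 1928).

    Hypothetical helper; assumes SOCKS5, which the source does not confirm.
    """
    addr = ipaddress.IPv4Address(worker_ip).packed  # 4 raw address bytes
    # VER=5, CMD=1 (CONNECT), RSV=0, ATYP=1 (IPv4), address, big-endian port
    return b"\x05\x01\x00\x01" + addr + struct.pack("!H", port)

if __name__ == "__main__":
    request = socks5_connect_request("10.0.0.5", 5000)
    print(SOCKS5_GREETING.hex(), request.hex())
```

Under these assumptions the client sends the greeting and then the request to the proxy on the front-end, and the proxy opens the connection to the worker on its behalf, so only the front-end needs a publicly reachable address.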
